Cloud Leak Case Study: The True Cost of One Misconfiguration

In cloud ecosystems, a single misconfiguration can trigger a cascade of losses. This white paper investigates the financial impact of one misconfiguration, then shows how risk-based remediation and deliberate architectural discipline reduce that cost. We translate the lessons into an actionable framework for operational resilience, threat awareness, and ROI-driven security. The goal is to equip security leaders with a practical playbook to prevent costly leaks and speed incident containment. The analysis centers on infrastructure nuances, threat vectors, and the economics of security decisions.

Immediate Exposure Vectors

A misconfigured storage bucket opened the door to unauthorized access. Such breach pathways typically originate from weak access controls, exposed keys, or incorrect IAM policies. The result is rapid data exfiltration whose financial impact is hard to trace. In practice, attackers combine automated scans with manual probing to find weak exposure points. The operational cost includes incident response time, forensics, and legal obligations. As attackers move through the environment, its flexibility becomes a liability. The cost is not only data loss but also the reputational damage that follows. The risk is magnified in multi-cloud environments, where policies diverge across providers.

A misconfiguration can invite data blackmail or encryption-based extortion. The misstep erodes trust with customers and partners. Immediate responses must establish containment, preserve data integrity, and maintain chain of custody. Security teams must trace where access originated and who approved the policy. The central question is whether the organization can prove it acted swiftly under a robust playbook. Here, the cost is measured in hours of downtime and hours spent communicating with stakeholders. The operational burden compounds as more teams join the remediation.

Root-cause visibility matters. Without it, executives face uncertain post-incident metrics. The first 24 hours determine whether the incident remains contained or grows into a systemic concern. The organization must steadily tighten its policy review cadence. Only then can preventive controls scale across teams and cloud accounts.

Financial Fallout and Metrics

The second wave of impact is financial. A single misconfiguration can trigger data transfer costs, API call surges, and storage read operations that escalate quickly. Cloud usage dashboards often lag behind the breach, so cost spikes may surface only after exfiltration is under way. The expense includes not only direct charges but also the long tail of remediation: reconstituting data sets, validating integrity, and restoring customer trust all require time and budget. The cost calculus must also consider third-party audits, regulatory penalties, and customer churn.

Security teams should translate breach dynamics into tangible ROI metrics. A robust model compares the cost of prevention with expected losses from a misconfiguration. In many cases the cost of prevention is a fraction of the breach cost. The model should include incident response hours, legal counsel, notification obligations, and potential fines. The goal is to show that proactive controls reduce the risk premium embedded in cloud spend. The data should drive governance decisions that balance speed with caution, and control with agility.
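A minimal sketch of that comparison treats expected annual loss as probability times impact and credits a control with the loss it avoids, net of its running cost. The class name, the probabilities, and the control cost are hypothetical; the 150,000 USD breach cost only echoes the scale of the exposure snapshot in this paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlOption:
    """A candidate preventive control; every input here is a hypothetical estimate."""

    name: str
    annual_cost: float         # USD per year to operate the control
    probability_before: float  # annual likelihood of a costly leak without the control
    probability_after: float   # annual likelihood with the control in place
    breach_cost: float         # estimated loss per incident, in USD

    def expected_loss(self, probability: float) -> float:
        # Expected annual loss = likelihood x impact.
        return probability * self.breach_cost

    def net_benefit(self) -> float:
        # Avoided expected loss minus the cost of running the control.
        avoided = self.expected_loss(self.probability_before) - self.expected_loss(self.probability_after)
        return avoided - self.annual_cost


option = ControlOption(
    name="automated least-privilege enforcement",
    annual_cost=20_000,
    probability_before=0.30,
    probability_after=0.05,
    breach_cost=150_000,
)
print(f"{option.name}: net benefit {option.net_benefit():,.0f} USD per year")
```

A positive net benefit means the control pays for itself in expected terms; the result is only as reliable as the probability estimates feeding it.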

Quantified Exposure Snapshot

To illustrate the economics, consider a hypothetical 12-month window with a single exposure. The misconfiguration triggers 2 TB of data egress and 1 million API calls beyond the normal baseline. In this scenario, storage and egress charges are assumed to combine for a 60,000 USD impact. Add 120 hours of incident response at 250 USD per hour (30,000 USD) and 30 hours of forensics at 300 USD per hour (9,000 USD). Legal and customer communications can reach 40,000 USD. These itemized costs total 139,000 USD; once third-party audits and regulatory penalties are added, the annualized cost may exceed 150,000 USD. In practice, long-term costs often surpass these near-term numbers because of reputational effects and regulatory follow-up.
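The snapshot's line items can be tallied directly. The figures are the hypothetical ones above; they sum to 139,000 USD, so a total above 150,000 USD depends on costs the snapshot does not itemize, such as audits, penalties, and churn.

```python
# Hypothetical line items from the quantified exposure snapshot (USD).
storage_and_egress = 60_000
incident_response = 120 * 250   # 120 hours at 250 USD per hour
forensics = 30 * 300            # 30 hours at 300 USD per hour
legal_and_comms = 40_000

line_items = {
    "storage and egress": storage_and_egress,
    "incident response": incident_response,
    "forensics": forensics,
    "legal and communications": legal_and_comms,
}
direct_total = sum(line_items.values())

for item, cost in line_items.items():
    print(f"{item:<26}{cost:>10,} USD")
print(f"{'direct total':<26}{direct_total:>10,} USD")  # 139,000 USD
```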

A table beneath this section compares threat vectors and early warning signals. The table aligns exposure types with detection latency and potential losses. This helps executives understand which misconfigurations carry the highest cost risk. It also informs where to apply the most rigorous controls. The takeaway is that a single misconfiguration can ripple through multiple cost centers, not just data handling.

Architect’s Defensive Audit

This section emphasizes a structured approach to prevent similar incidents. It offers a concise audit checklist aligned with the risk you accept and the controls you deploy. The checklist focuses on identity, data, and infrastructure boundaries. It also emphasizes the need for continuous improvement and measurement.

Executive summaries should highlight what was learned and what must change. The audit should be mapped to a particular cloud account and tied to a governance plan. The aim is a mature posture that reduces the chance of repeated failures. The audit also considers how to improve the resilience of automation and the speed of remediation. Bold leadership is required to balance discipline with operational tempo.

| Area | Immediate Control | Long-Term Change | Responsible Party | Time to Implement |
|---|---|---|---|---|
| Identity and Access | Enforce least privilege | Quarterly role-based access reviews | SecOps | 4 weeks |
| Data Exposure | Use private storage and access logs | Data loss prevention tooling | Cloud Security | 6 weeks |
| Network Segmentation | Default deny by VPC | Perimeter and micro-segmentation | Network Team | 8 weeks |
| Monitoring | Establish baseline and alerts | Anomaly detection with ML signals | SIEM/AM | 12 weeks |
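The table's first immediate control, enforcing least privilege, can be partly automated by scanning policy documents for wildcards. This minimal sketch assumes policies shaped like AWS IAM JSON (Effect, Action, and Resource keys); the function name is hypothetical, and it ignores conditions, NotAction, and permissions boundaries, so it is a first-pass filter rather than a full policy analyzer.

```python
import json


def find_overbroad_statements(policy_json: str) -> list[str]:
    """Flag Allow statements whose Action or Resource uses a wildcard."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a lone statement may be an object, not a list
        statements = [statements]

    findings = []
    for index, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue  # Deny statements narrow access, so they are not flagged
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Action and Resource may each be a single string or a list of strings.
        if isinstance(actions, str):
            actions = [actions]
        if isinstance(resources, str):
            resources = [resources]
        for action in actions:
            if action == "*" or action.endswith(":*"):
                findings.append(f"statement {index}: wildcard action '{action}'")
        if "*" in resources:
            findings.append(f"statement {index}: wildcard resource '*'")
    return findings


example = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {"Effect": "Allow", "Action": ["s3:GetObject"], "Resource": "arn:aws:s3:::reports/*"},
    ],
})
for finding in find_overbroad_statements(example):
    print(finding)
```

Only the first statement is flagged; the second grants one action on one path prefix, which is the least-privilege shape the control aims for.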

Risk-Based Remediation: Insights from a Cloud Misconfiguration

The Resilience Maturity Scale

The Resilience Maturity Scale describes stages from initial ad hoc fixes to optimized, policy-driven risk reduction. Stage one centers on basic policy enforcement and monitoring. Stage two introduces repeatable playbooks for containment. Stage three aligns controls with business risk thresholds. Stage four integrates risk across the enterprise, including supply-chain risk. This framework helps leadership see where the organization stands and what it must do next. It also drives a coordinated budget plan so that security and operations invest the right resources at the right time.

In practice, advancing maturity reduces the window of exposure. It also improves the predictability of security outcomes. The scale supports risk-based remediation by linking observed incidents to specific control gaps. It makes clear where to invest in automation and where human intervention is essential. The framework is not a one-time exercise. It requires continuous evaluation as cloud services evolve and the threat landscape changes. The goal is a steady state of low residual risk.

Prioritization Framework and ROI

A practical approach uses a risk scoring model that quantifies potential loss and probability. This helps allocate limited security budgets to the most dangerous misconfigurations first. The framework prioritizes fixes that reduce exposure for the greatest number of workloads. It also considers how quickly a misconfiguration can reemerge due to changing configurations. ROI comes from faster containment, lower data exposure, and more efficient response. The model supports decision making when we must trade speed for certainty.

The framework also factors in the cost of repeated incidents and the impact on customer confidence. It aligns remediation with business processes to minimize disruption. Prioritization must consider regulatory requirements and contractual obligations. The final aim is to allocate resources to areas with the highest risk density. This ensures resilience without stalling operational momentum.
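A minimal sketch of such a scoring model follows. It assumes the score is expected loss (loss times probability) multiplied by the number of affected workloads and by a recurrence factor for settings that tend to drift back after a fix. The field names, the multiplicative form, and the sample values are hypothetical; a fuller model would also weight regulatory and contractual exposure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A detected misconfiguration with hypothetical risk inputs."""

    name: str
    loss_per_workload: float   # estimated USD loss if one affected workload is exploited
    probability: float         # estimated annual likelihood of exploitation (0 to 1)
    workloads_affected: int    # breadth: how many workloads share the setting
    recurrence_factor: float   # above 1.0 if the setting tends to drift back after a fix

    def risk_score(self) -> float:
        expected_loss = self.loss_per_workload * self.probability
        return expected_loss * self.workloads_affected * self.recurrence_factor


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Order findings so the highest risk score is remediated first."""
    return sorted(findings, key=lambda f: f.risk_score(), reverse=True)


backlog = [
    Finding("stale TLS configuration", 40_000, 0.05, 5, 1.0),
    Finding("overbroad IAM role", 80_000, 0.10, 10, 1.2),
    Finding("public storage bucket", 150_000, 0.20, 3, 1.5),
]
for finding in prioritize(backlog):
    print(f"{finding.risk_score():>12,.0f}  {finding.name}")
```

Multiplying by workload count is what pushes broadly shared misconfigurations up the queue, matching the framework's preference for fixes that cover the most workloads.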

Scenario Playbooks and Metrics

In practice, playbooks capture how teams respond to common misconfigurations. Each playbook lists triggers, owners, time to containment, and communication steps. It also documents how to verify data integrity after containment. The metrics track time to containment and post-incident remediation. Leaders use this data to refine the playbooks and update governance policies.
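One way to make the playbook fields and the containment metric concrete is a small data model. The class names, the four-hour target, and the timestamps are hypothetical; the sketch only shows how containment time can be computed per incident and checked against each playbook's target.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Playbook:
    """Fields mirror the playbook elements described above; names are illustrative."""

    trigger: str
    owner: str
    containment_target: timedelta
    communication_steps: list[str]
    integrity_checks: list[str]


@dataclass
class IncidentRecord:
    playbook: Playbook
    detected_at: datetime
    contained_at: datetime

    def containment_time(self) -> timedelta:
        return self.contained_at - self.detected_at

    def met_target(self) -> bool:
        # Feeds the containment-time metric leaders use to refine playbooks.
        return self.containment_time() <= self.playbook.containment_target


public_bucket = Playbook(
    trigger="storage bucket becomes publicly readable",
    owner="Cloud Security",
    containment_target=timedelta(hours=4),
    communication_steps=["page incident commander", "brief legal and communications"],
    integrity_checks=["compare object checksums with the last known-good inventory"],
)
record = IncidentRecord(public_bucket, datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 14, 30))
print(record.containment_time(), record.met_target())  # 5:30:00 False
```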

The playbooks support automation through scripts and configuration checks. They become part of the security operating model. The model links to reward structures that incentivize proactive risk detection. It also creates a feedback loop where experiences from incidents shape future policy.

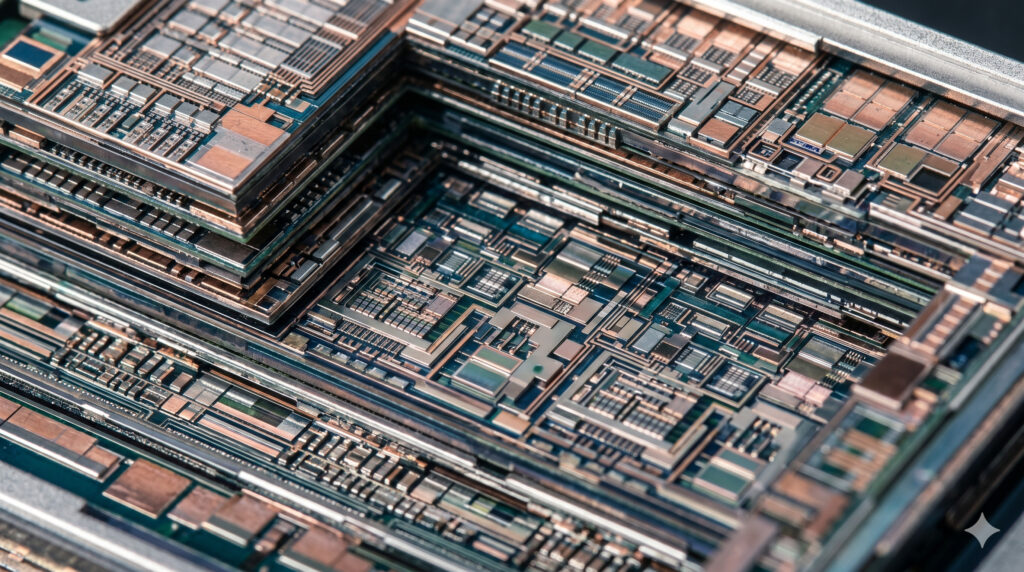

Infrastructure Nuances and Zero Trust in Practice

Network Segmentation and Lateral Movement

Zero Trust policies assume network compromise and restrict lateral movement. Segmentation reduces the blast radius and limits access to sensitive resources. Each segment enforces strict authentication and continuous verification. Micro-segmentation aids rapid containment when misconfigurations occur. Segmented networks also ease forensics by narrowing the scope of investigation. The key is to implement dynamic policies that respond to evolving threats.

Implementing network segmentation requires precise policy definitions. It also requires validation to ensure legitimate services remain functional. A misstep here can degrade application performance or disrupt legitimate workflows. The challenge is to balance strict controls with operational agility. Best practice combines segmentation with identity-based controls and continuous monitoring.
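The default-deny rule at the heart of segmentation can be sketched as an explicit allow-list of flows between segments. The segment names, ports, and the Flow type are hypothetical; in a real deployment this logic lives in security groups, firewall rules, or a service mesh, and would be paired with the identity checks described above.

```python
from typing import FrozenSet, NamedTuple


class Flow(NamedTuple):
    source: str       # segment where the traffic originates
    destination: str  # segment the traffic is addressed to
    port: int


# Hypothetical allow-list; any flow not listed is denied.
ALLOWED_FLOWS: FrozenSet[Flow] = frozenset({
    Flow("web", "app", 8443),
    Flow("app", "db", 5432),
})


def is_allowed(flow: Flow) -> bool:
    """Default deny: permit a flow only if it is explicitly allow-listed."""
    return flow in ALLOWED_FLOWS


print(is_allowed(Flow("web", "app", 8443)))  # True: an approved path
print(is_allowed(Flow("web", "db", 5432)))   # False: lateral hop skipping the app tier
```

Because unknown flows fail closed, a misconfigured web tier cannot reach the database directly, which is the blast-radius reduction segmentation is meant to deliver.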

API Hardening and Cryptographic Agility

APIs become entry points when they are misconfigured or misused. Hardening API endpoints includes strong authentication, minimal permissions, and robust input validation. Cryptographic agility means updating algorithms and keys without downtime. It also requires secure key management and rotation policies. These measures reduce the risk of credential leakage and data exposure. The threat landscape demands that cryptography stay current with industry standards.

In practice, teams must implement automated key rotation and streamlined certificate management. They must also test API gateway policies under realistic attack simulations. The goal is to minimize the mean time to detect and mitigate API-based threats. Together, API hardening and cryptographic agility form a core defense against data breaches and supply-chain risks.
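The key-versioning pattern behind rotation without downtime can be sketched with Python's standard hmac and secrets modules. The KeyRing class and its key-ID scheme are hypothetical, and HMAC-SHA256 stands in for whatever algorithm a system actually uses; production keys belong in a managed key service, not process memory. The idea is that each tag carries the ID of the key that produced it, so old keys can keep verifying during a transition window and then be retired.

```python
import hashlib
import hmac
import secrets
from typing import Dict, Optional, Tuple


class KeyRing:
    """Versioned HMAC keys: sign with the newest key, verify with any key not yet retired."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}
        self._next_version = 1
        self.current_id: Optional[str] = None

    def rotate(self) -> str:
        """Generate a fresh key and make it current; older keys remain valid for verification."""
        key_id = f"k{self._next_version}"
        self._next_version += 1
        self._keys[key_id] = secrets.token_bytes(32)
        self.current_id = key_id
        return key_id

    def retire(self, key_id: str) -> None:
        """Drop an old key once nothing signed with it still needs verifying."""
        if key_id == self.current_id:
            raise ValueError("rotate to a new key before retiring the current one")
        self._keys.pop(key_id, None)

    def sign(self, message: bytes) -> Tuple[str, str]:
        """Return (key_id, tag) so verifiers know which key produced the tag."""
        if self.current_id is None:
            raise RuntimeError("no active key; call rotate() first")
        tag = hmac.new(self._keys[self.current_id], message, hashlib.sha256).hexdigest()
        return self.current_id, tag

    def verify(self, message: bytes, key_id: str, tag: str) -> bool:
        key = self._keys.get(key_id)
        if key is None:
            return False  # unknown or retired key
        expected = hmac.new(key, message, hashlib.sha256).hexdigest()
        return hmac.compare_digest(expected, tag)


ring = KeyRing()
ring.rotate()                                        # k1 becomes current
key_id, tag = ring.sign(b"export manifest")
ring.rotate()                                        # k2 becomes current; k1 still verifies
print(ring.verify(b"export manifest", key_id, tag))  # True
ring.retire("k1")                                    # transition window closed
print(ring.verify(b"export manifest", key_id, tag))  # False
```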

Threat Modeling and Threat Vectors in Cloud

Threat Landscape Mapping

Mapping threat vectors helps teams see where misconfigurations can cause damage. Typical vectors include data exposure, insecure defaults, misrouted data flows, and compromised credentials. A map clarifies the attacker's opportunities and the defender's responses. It also supports a risk-aware culture within the organization.

Threat modeling should be an ongoing activity. Cloud services expand quickly, and new classes of misconfiguration appear with them. The map must include third-party services, supply-chain partners, and internal processes. It should capture evolving attack patterns and the effectiveness of controls. The outcome is better anticipation of risk and faster response as new threats emerge.
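A threat map can start as a simple structure that pairs each vector with the controls currently mitigating it, which makes coverage gaps easy to list. The vectors echo those named above plus third-party access; the control names and the gaps shown are hypothetical.

```python
# Hypothetical threat map: each vector lists the controls currently mitigating it.
THREAT_MAP: dict[str, list[str]] = {
    "data exposure": ["private storage by default", "access logging"],
    "insecure defaults": ["pre-deployment policy checks"],
    "misrouted data flows": [],
    "compromised credentials": ["multi-factor authentication", "key rotation"],
    "third-party service access": [],
}


def uncovered_vectors(threat_map: dict[str, list[str]]) -> list[str]:
    """Return vectors with no mitigating control, the first candidates for review."""
    return sorted(vector for vector, controls in threat_map.items() if not controls)


print(uncovered_vectors(THREAT_MAP))  # ['misrouted data flows', 'third-party service access']
```

Keeping the map in version control lets each threat-modeling session update it and see which gaps closed since the last review.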

Attack Scenarios and Mitigations

Common scenarios illustrate how misconfigurations lead to breaches. Each scenario includes the attack path, the affected assets, and the mitigation strategy. Effective mitigations combine policy, automation, and human judgment. Attack simulations test whether teams can close gaps quickly. Each scenario's narrative shapes both security posture and budget decisions.

The goal is to render risk tangible. Executives want to understand how the costs and benefits line up. The mitigation strategy should be practical, measurable, and aligned with risk appetite. It must incorporate data governance, access control, and cryptographic practices.

The Adversarial Friction Framework

Model Overview

The Adversarial Friction Framework explains how adversaries exploit friction points. It identifies where attackers are likely to search for misconfigurations and how defenders can raise the barrier to entry. The framework emphasizes that resilience grows when friction is high at the most valuable attack stages. It also notes that friction should not degrade user experience or business operations.

The framework integrates with the risk-based remediation model. Friction measurements guide where to focus hardening efforts. The approach favors proactive lockdown and rapid containment of misconfigurations. It also supports leadership in making informed tradeoffs between ease of use and security.

Operationalizing Friction

Turning theory into practice requires measurable indicators. Teams monitor how long it takes to detect, contain, and remediate misconfigurations. They track the frequency of policy drift and the rate of unauthorized access attempts. They also measure the financial impact of resolved incidents.

Operationalizing friction means arming teams with simple, repeatable workflows. It demands automation to reduce human error while preserving the ability to make careful judgments. The objective is a resilient posture that can withstand the evolving threat landscape.
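The detect, contain, and remediate indicators can be computed from incident timestamps. The sketch assumes each incident records when the misconfiguration was introduced, detected, contained, and remediated; the timestamps are hypothetical, and remediation time is measured here from containment to closure, which is one of several conventions.

```python
from datetime import datetime, timedelta
from statistics import mean

# Hypothetical incident timeline: (introduced, detected, contained, remediated).
INCIDENTS = [
    (datetime(2024, 1, 3, 8, 0), datetime(2024, 1, 3, 20, 0),
     datetime(2024, 1, 4, 2, 0), datetime(2024, 1, 5, 2, 0)),
    (datetime(2024, 2, 10, 9, 0), datetime(2024, 2, 10, 13, 0),
     datetime(2024, 2, 10, 15, 0), datetime(2024, 2, 11, 9, 0)),
]


def mean_hours(deltas: list[timedelta]) -> float:
    """Average a list of durations, expressed in hours."""
    return mean(d.total_seconds() / 3600 for d in deltas)


mttd = mean_hours([det - intro for intro, det, _, _ in INCIDENTS])  # time to detect
mttc = mean_hours([cont - det for _, det, cont, _ in INCIDENTS])    # time to contain
mttr = mean_hours([rem - cont for _, _, cont, rem in INCIDENTS])    # time to remediate
print(f"MTTD {mttd:.1f} h, MTTC {mttc:.1f} h, MTTR {mttr:.1f} h")
```

Tracking these three means over time shows whether added friction is shortening the attacker's window or merely shifting delay from one stage to another.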

The Resilience Maturity Scale and ROI Metrics

Maturity Assessment and Roadmap

The maturity assessment translates a qualitative posture into a roadmap. It maps capability levels to concrete milestones. A clear path toward higher resilience includes policy discipline, automation, and continuous improvement. The objective is to reduce risk to a level that the business can tolerate.

The roadmap prioritizes actions by impact and feasibility. It aligns with financial planning, so executives can forecast the cost of security over multi-year horizons. The scale provides a shared language for security teams and business units. It helps communicate the value of resilience through credible metrics.

Return on Security Investment and Metrics

ROI for security includes avoided losses, reduced incident duration, and improved regulatory posture. It aggregates benefits from prevention into a single, comparable metric. The metrics also reveal the cost of controls and the efficiency of responses. When used properly, ROI informs decisions about automation, staffing, and cloud service choices.
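A common formulation of return on security investment (ROSI), offered here as one option rather than this paper's prescribed metric, compares the drop in annualized loss expectancy (ALE) with the control's cost. Here p is the annual probability of a costly incident, L the loss per incident, and C the annual cost of the control; the worked values are hypothetical.

```latex
\begin{aligned}
\mathrm{ALE} &= p \times L \\
\mathrm{ROSI} &= \frac{\left(\mathrm{ALE}_{\text{before}} - \mathrm{ALE}_{\text{after}}\right) - C}{C} \\
\mathrm{ROSI}_{\text{example}} &= \frac{(0.20 \times 150{,}000 - 0.05 \times 150{,}000) - 15{,}000}{15{,}000}
  = \frac{22{,}500 - 15{,}000}{15{,}000} = 0.5
\end{aligned}
```

A ROSI of 0.5 means each dollar spent on the control returns 1.50 USD in avoided expected loss, before any benefit from shorter incidents or improved regulatory posture.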

Security leaders should present ROI in terms of business outcomes. They should show how investment reduces risk and mitigates the financial consequences of misconfigurations. The emphasis is on sustainable resilience and predictable business performance.

Chief Security Officer FAQ

Q1 What is the most cost-effective control to prevent cloud leaks?

A1 The most cost-effective control is enforcing least privilege with automated policy enforcement. It reduces unauthorized access and limits the blast radius. In practice, implement role-based access controls and continuous auditing. Pair this with automated drift detection to catch changes quickly. This combination prevents common misconfigurations from becoming breaches. It also scales across multiple cloud accounts with a repeatable process. The savings come from fewer incidents and faster containment. It aligns security spending with actual risk levels and business priorities.
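Drift detection reduces to comparing deployed settings against an approved baseline. The setting names below are hypothetical, and in practice the deployed values would come from a provider's configuration API or an infrastructure-as-code state file rather than a literal dictionary.

```python
def detect_drift(baseline: dict[str, object], deployed: dict[str, object]) -> dict[str, tuple[object, object]]:
    """Map each drifted setting to (expected, actual); None marks a missing setting."""
    drift = {}
    for key in sorted(baseline.keys() | deployed.keys()):
        expected, actual = baseline.get(key), deployed.get(key)
        if expected != actual:
            drift[key] = (expected, actual)
    return drift


approved = {"block_public_access": True, "encryption_at_rest": True, "versioning": True}
observed = {
    "block_public_access": False,  # someone disabled the guardrail
    "encryption_at_rest": True,
    "versioning": True,
    "public_read_acl": True,       # setting added outside the approved template
}
for setting, (expected, actual) in detect_drift(approved, observed).items():
    print(f"DRIFT {setting}: expected {expected}, found {actual}")
```

Run on a schedule or on every change event, a check like this turns the drift it finds into alerts before a relaxed setting becomes an exposure.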

Q2 How do we quantify the impact of a single misconfiguration on ROI?

A2 The impact is calculated by combining direct cloud costs, incident response hours, and reputational effects. Add regulatory penalties if applicable. Compare this total to the cost of preventive controls and rapid containment. Use a formal model that assigns a probability to recurrence. The model should consider asset criticality, data sensitivity, and data volume. The ROI improves as preventive controls reduce the probability of expensive outcomes. This approach clarifies where to invest for maximum protection and fastest payback.

Q3 What framework best aligns with risk-based remediation?

A3 The Resilience Maturity Scale plus a risk scoring model provides a strong alignment. The maturity scale defines capability goals across people, processes, and technology. The risk scoring model prioritizes remediation by potential loss and likelihood. When integrated, the frameworks translate risk into concrete projects, budgets, and timelines. They also enable tracking of progress and reallocation of resources as the threat landscape shifts. The combination helps leadership balance operational velocity with robust security controls. It is a practical and scalable approach.

Q4 How can we reduce data exposure without harming business agility?

A4 Implement data classification and dynamic access controls. Enforce network segmentation and private data flows. Use encryption in transit and at rest with key rotation. Build automated checks for misconfigurations before deployment. Introduce policy as code reviewed by both security and product teams. The goal is to minimize risk while preserving speed. Agile teams gain confidence when controls are visible, testable, and reversible. The result is a more secure, responsive environment.
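A policy-as-code check can run in the deployment pipeline and block changes that violate classification rules. The resource model, the classification labels, and the two rules below are illustrative assumptions; dedicated engines such as Open Policy Agent fill this role in production, but the gating logic is the same: evaluate every planned resource and fail the deployment on any violation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageResource:
    """A planned storage resource; fields and labels are hypothetical."""

    name: str
    classification: str        # e.g. "public", "internal", "confidential"
    publicly_accessible: bool
    encrypted_at_rest: bool


SENSITIVE = {"internal", "confidential"}  # labels that must never be public


def violations(resource: StorageResource) -> list[str]:
    problems = []
    if not resource.encrypted_at_rest:
        problems.append(f"{resource.name}: encryption at rest is disabled")
    if resource.publicly_accessible and resource.classification in SENSITIVE:
        problems.append(f"{resource.name}: {resource.classification} data is publicly accessible")
    return problems


def gate(plan: list[StorageResource]) -> bool:
    """Return True only if every resource in the deployment plan passes."""
    all_problems = [p for r in plan for p in violations(r)]
    for problem in all_problems:
        print("BLOCKED:", problem)
    return not all_problems


plan = [
    StorageResource("marketing-assets", "public", True, True),
    StorageResource("customer-exports", "confidential", True, True),
]
print("deploy" if gate(plan) else "reject")
```

Because the rules live in version control, security and product teams can review them like any other change, and a rule that blocks legitimate work can be reverted quickly.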

Q5 How do we measure success after remediation?

A5 Use a scorecard that tracks detection latency, containment time, and policy drift. Include data loss incidents, mean time to recovery, and user impact. Compare post-remediation metrics to baseline values from before the incident. Regularly audit access controls and API configurations. Success means lower exposure probability, faster containment, and less data loss. It also includes stakeholder confidence. A transparent dashboard communicates resilience progress to executives.

Q6 What role does cryptographic agility play in preventing leaks?

A6 Cryptographic agility ensures you can upgrade algorithms and keys without downtime. It reduces exposure during algorithm transition periods. Implement automated key management with rotation schedules and secure storage. Ensure API gateways enforce current cryptographic policies and reject deprecated ciphers. Regularly test cryptographic changes in staging, then roll them out to production gradually to minimize risk. The approach minimizes risk from stale cryptography. It also demonstrates a proactive security posture to customers and regulators.

Q7 How should we handle third-party dependencies?

A7 Treat third-party services as part of your attack surface. Implement vendor risk management with continuous monitoring and contractual controls. Require security attestations and evidence of secure coding practices. Use API management to enforce consistent security policies across partners. Regularly review service level agreements for security obligations. A robust program prevents supply-chain misconfigurations from becoming outages or leaks. It also helps build trust with customers who rely on your ecosystem.

Q8 What is the path to operational resilience in practice?

A8 Build a unified security program that combines policy, automation, and people. Align cloud governance with business goals and regulatory requirements. Establish a measurable security posture with ongoing risk assessments. Run frequent tabletop exercises and real-world simulations. The payoff is a resilient organization that can endure incidents and recover quickly. Leaders who implement these steps reduce losses, maintain continuity, and sustain trust in the brand.

This paper demonstrates that the cost of a single misconfiguration extends beyond immediate data exposure. A disciplined, risk-based remediation approach translates threat awareness into measurable resilience and financial prudence. By combining a maturity framework with practical audit checklists and a clear ROI lens, organizations can prevent leaks, contain incidents swiftly, and protect both value and reputation in a volatile threat landscape.
